How AI Music Models Are Trained: Neural Nets, Diffusion, and Why Your Prompts Work

You type “melancholic lo-fi piano, 75 BPM, late night study session” and a few seconds later you have a full track. But what actually happened between those two things?

AI music generation isn’t magic — it’s a specific chain of mathematical processes running on learned patterns extracted from enormous amounts of music. Understanding how that chain works makes you a dramatically better prompt writer, and gives you a genuine mental model for why some prompts land and others produce mush.

This article covers the full picture: how training data is prepared, what neural networks actually learn from audio, why diffusion models produce better results than older approaches, and how your text prompt gets translated into sound.

Step 1: What the Model Actually “Sees”

AI music models don’t process audio the way you hear it — as a continuous wave of pressure changes. Raw audio waveforms are too high-dimensional and too temporally dense to learn from directly. A single second of 48kHz audio is 48,000 individual data points. A three-minute song is 8.6 million.

Instead, audio gets converted into a spectrogram: a 2D image where the x-axis is time, the y-axis is frequency, and the brightness at each point represents how loud that frequency is at that moment. Suddenly, a waveform becomes a picture. And neural networks are exceptionally good at learning patterns in pictures.

The most useful spectrogram variant for music is the mel spectrogram, which compresses the frequency axis to match human hearing (we’re more sensitive to differences at low frequencies than high ones). This gives the model a representation that’s already tuned to how music is perceived.

Step 2: The Training Pipeline

Training a music model requires millions of audio clips, all processed into spectrograms or compressed into a latent space — a compact numerical representation that captures the essential features of a sound without storing every data point.

Here’s the broad pipeline:

- Data collection — millions of tracks across genres, tempos, keys, and styles

- Preprocessing — convert to mel spectrograms or encode into latent representations using a separate encoder model (a VAE, or Variational Autoencoder)

- Text pairing — each audio clip gets associated with text: genre tags, mood labels, instrumentation metadata, sometimes actual human descriptions

- Training — the model learns to predict what audio corresponds to what text, and what audio typically follows what audio

The text-audio pairing is the critical piece. This is what makes the model “know” that the word “melancholic” correlates with minor keys, slower tempos, and certain instrument timbres. It’s not programmed — it’s learned statistically from the patterns in labeled data.

Step 3: What Transformers Learn From Music

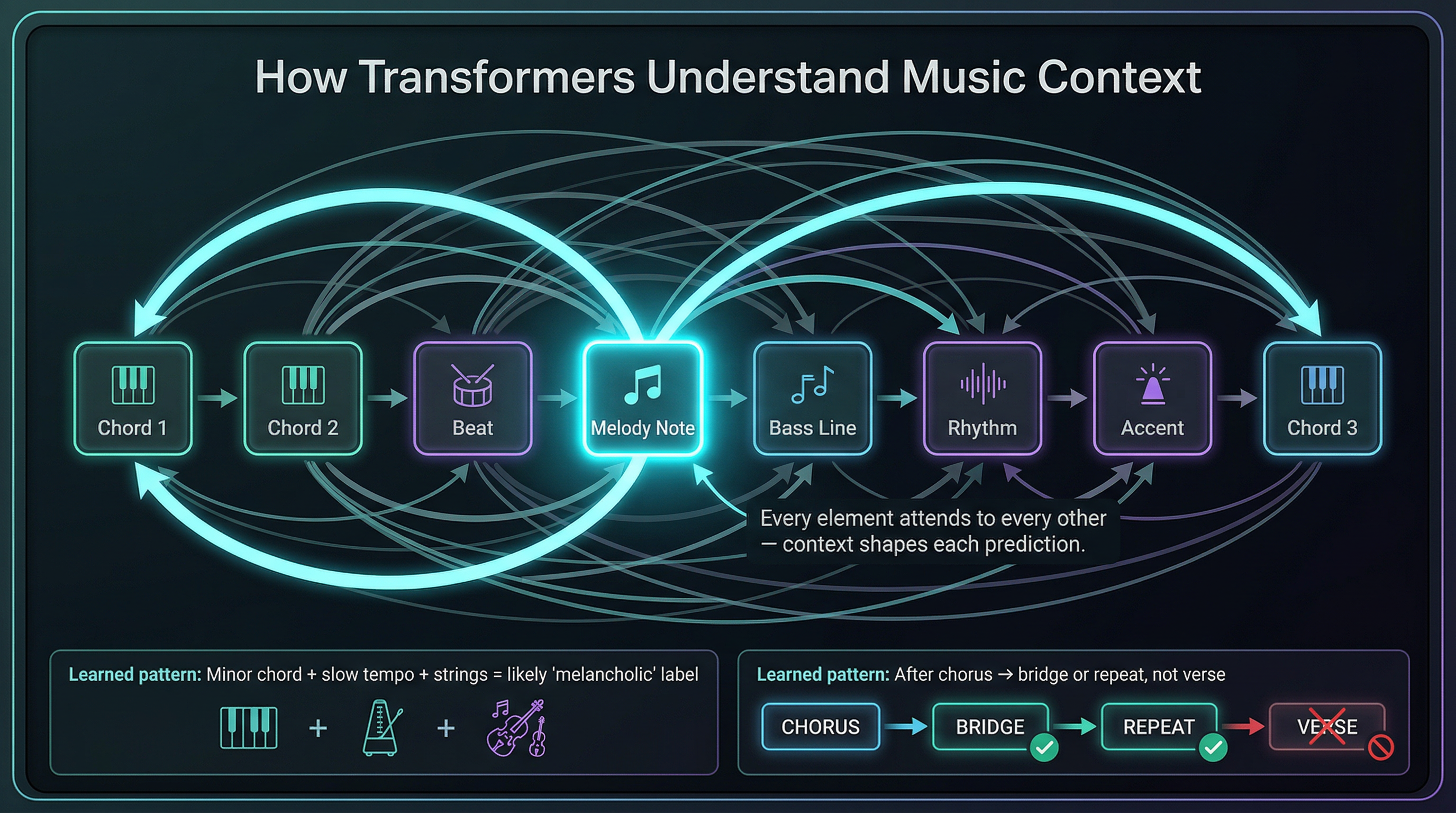

The architecture underlying most modern AI music models — and language models, and image models — is the transformer. Originally designed for text, transformers turned out to be powerful for any sequential data where context matters.

Music is deeply contextual. The note on beat 3 of bar 4 doesn’t exist independently — it exists in relation to what came before it harmonically, rhythmically, and dynamically. Transformers capture this through a mechanism called self-attention: every element in a sequence learns to “look at” every other element and weight its influence.

In practice, a transformer trained on music learns things like:

- After a I chord, a IV or V chord is statistically likely

- After a verse, a chorus is likely

- At 130 BPM in a major key with driving drums, a drop is probable

- A “melancholic” label predicts minor keys, descending melodic lines, and dynamics that swell and resolve

None of this is written as rules. The model extracts these patterns by processing enough examples that the statistical regularities become encoded in billions of numerical weights.

Step 4: Diffusion — Why Modern Models Sound Better

Older generative approaches (like GANs — Generative Adversarial Networks) had a tendency to produce artifacts, mode collapse (repetitive outputs), and instability during training. The current generation of music models, including Google’s Lyria 3 which powers Studio AI, uses diffusion.

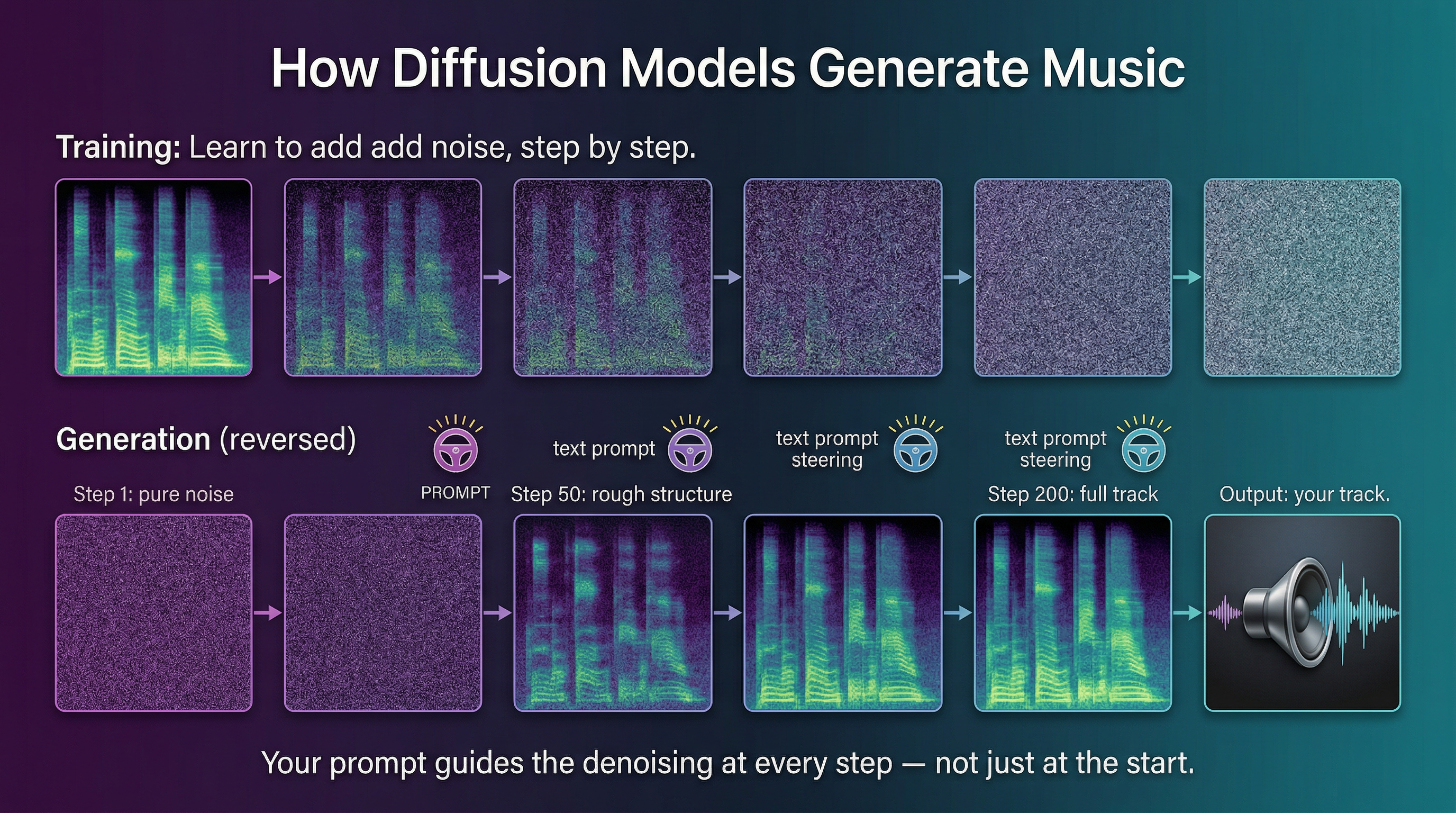

The concept is counterintuitive but elegant:

- Take a real audio spectrogram

- Gradually add random noise to it, step by step, until it’s pure static

- Train a neural network to learn the reverse: given a noisy spectrogram, predict what the slightly-less-noisy version looks like

- Repeat until the network can denoise all the way back to a clean spectrogram

At generation time, you start with pure random noise and run the denoising process repeatedly — but you steer it with your text prompt at each step. The model gradually resolves the noise into something that matches your description.

This is why diffusion models produce more coherent, higher-fidelity audio than previous approaches. They’re not generating the output in one shot — they’re iteratively refining it, guided by the prompt at every step.

Step 5: How Your Text Prompt Becomes Music

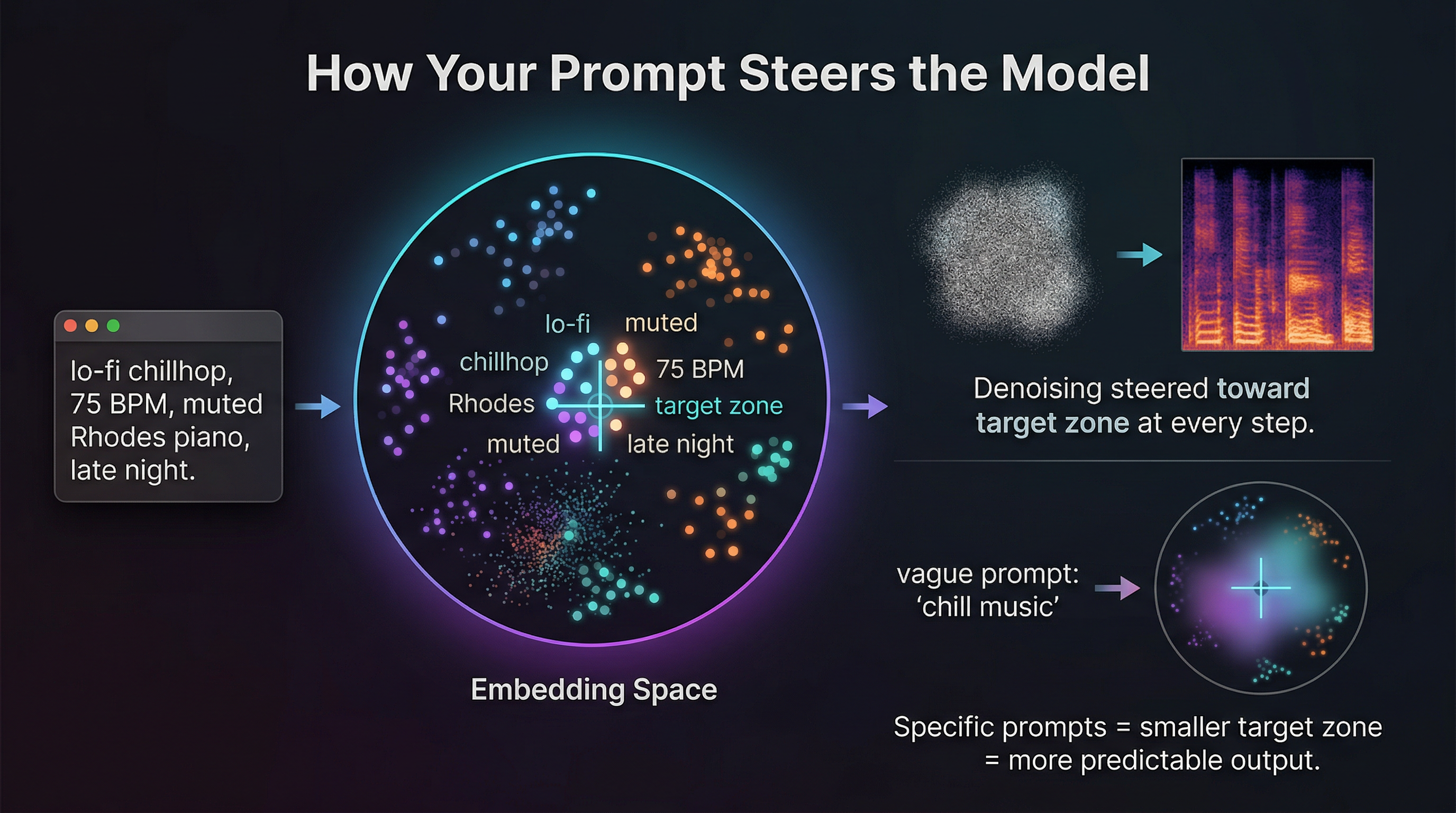

When you type a prompt, the model doesn’t read English the way you do. Your text gets converted into a sequence of tokens (roughly: word fragments), which are then embedded as vectors — lists of numbers — in a high-dimensional space where related concepts sit near each other.

“Lo-fi” and “chill” sit near each other. “Aggressive” and “distorted” sit near each other. “Minor key” and “melancholic” sit near each other. The model has learned these relationships from the text-audio pairs in its training data.

These text embeddings are then used to condition the diffusion process — they act as a steering signal at every denoising step, pulling the output toward audio that matches the described characteristics.

This is exactly why specificity matters so much in prompting:

- “chill music” — the text embedding is in a vague region of the space that overlaps with too many genres

- “lo-fi chillhop, 75 BPM, warm vinyl crackle, muted Rhodes piano, late-night atmosphere” — each additional term narrows the embedding into a more specific region, and the denoising process has a clearer target

More specific prompts don’t just help the model — they constrain the probability distribution the model is sampling from. Vague prompts sample from a broad distribution, which is why the output can feel generic or unfocused.

What This Means for Your Prompts

Understanding the architecture gives you a few practical principles:

Specificity compounds. Every additional descriptor narrows the target zone in embedding space. Genre + subgenre + tempo + instrumentation + mood is not redundant — each term adds constraint.

The model interpolates, it doesn’t follow rules. You can describe a sound that doesn’t map perfectly to a genre name. “Like a film score but with trap hi-hats” works because both concepts exist in the embedding space and the model can find a region that balances them.

Contradictory prompts produce averaging. “Energetic and melancholic” will be interpreted as an average of both regions rather than a paradox. If you want tension between two qualities, describe it instrumentally: “driving drums under a slow, mournful string melody.”

Structural tokens matter. Words like “intro,” “verse,” “chorus,” “outro,” “build,” “drop” are learned from structural metadata in training data — use them explicitly if you want structural shape.

Use our AI Music Prompt Builder to apply these principles automatically — it formats your inputs into a structured prompt that feeds the Lyria 3 engine with maximum specificity.

You just learned how the model thinks — now make it work for you. Studio AI’s music generator runs on Lyria 3. Describe exactly what you want and hear it back in seconds. Generate a track now →

Frequently Asked Questions

What model powers Studio AI’s music generator? Studio AI uses Lyria 3, Google’s music generation model. It’s a latent diffusion model conditioned on text embeddings — the same architectural family as image generators like Stable Diffusion, adapted for audio.

How much training data does a music model need? Large-scale music models are typically trained on millions of tracks. The quality and diversity of the training data directly affects the model’s range — a model trained heavily on Western pop will struggle with niche genres underrepresented in its training set.

Why do some prompts produce generic results? Vague prompts sample from a broad probability distribution. “Upbeat music” covers thousands of genres and styles, so the model picks the statistical center — which is often the most generic representation. Specific descriptors narrow the distribution to a more intentional region.

Can AI music models understand music theory? Not explicitly — there are no rules programmed in. But they’ve learned music theory implicitly from patterns in the data. The model “knows” that a tension resolves because it’s seen that pattern millions of times, not because it was taught what a tritone is.

Is AI-generated music truly original? It’s a new interpolation of patterns learned from existing music — not a copy of any training example, but not created from nothing either. Legally, this is still being worked out. Practically, the outputs are distinct enough to use as original creative work, and you own what you generate in Studio AI.

Turn What You Know Into Sound

You now understand what most people using AI music tools don’t: the model isn’t guessing — it’s navigating a learned map of sound, steered by your words. The more precisely you describe your target, the closer it lands.

That’s not a feature. That’s physics. Go use it.